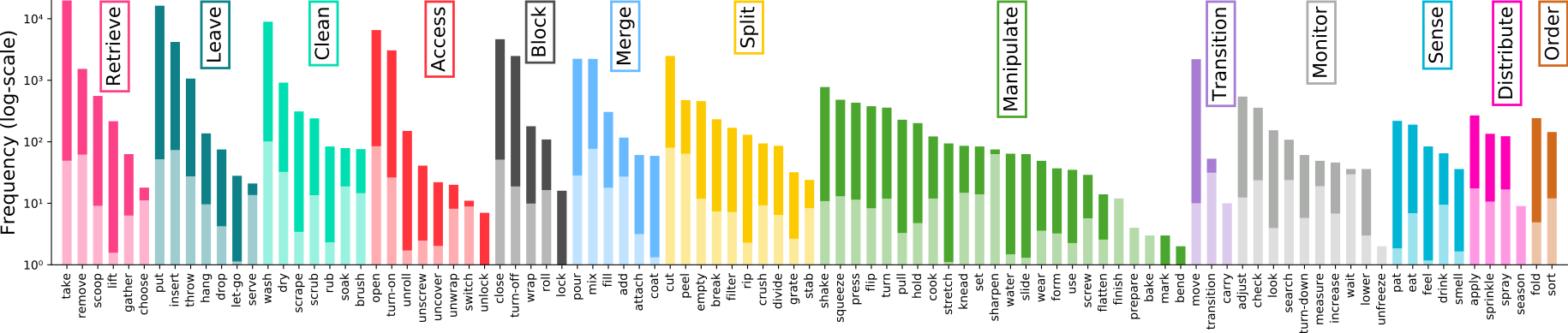

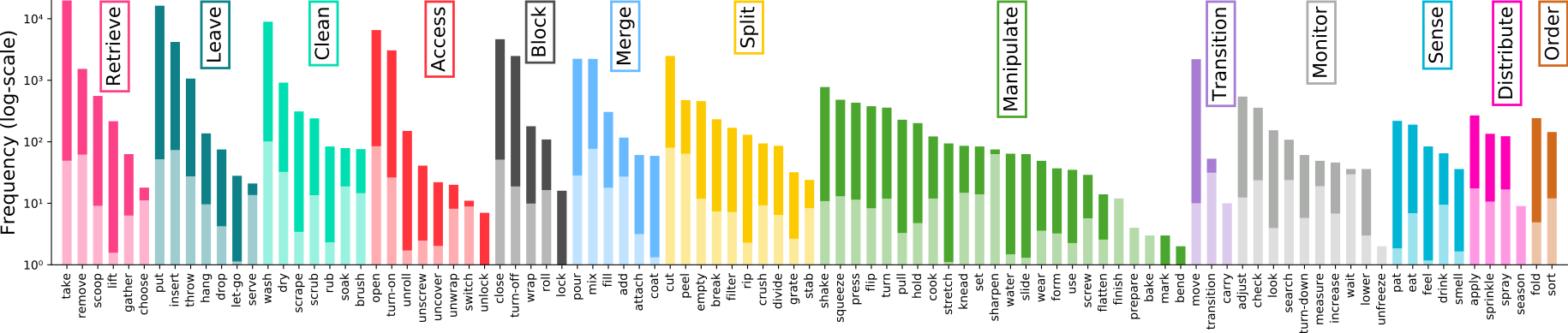

EPIC-KITCHENS-100 Stats and Figures

Some graphical representations of our dataset and annotations

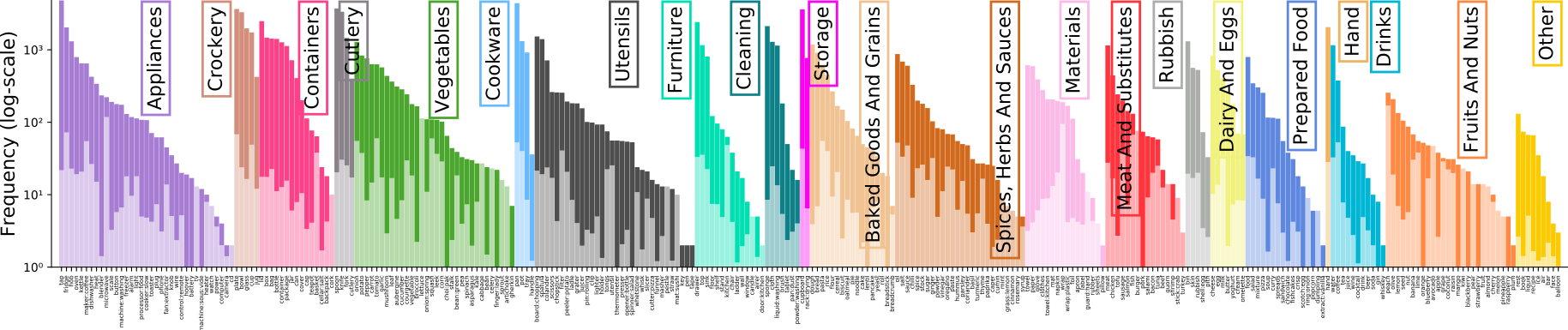

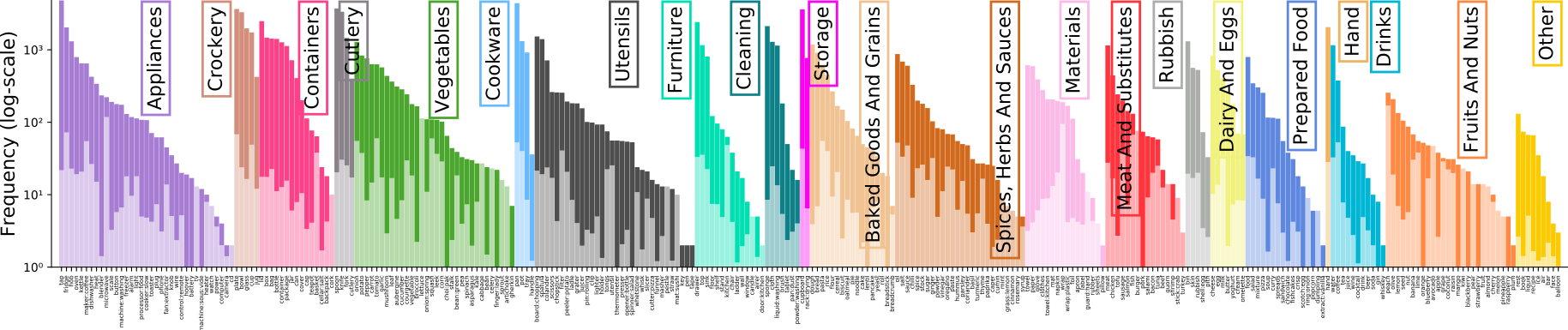

Annotation Pipeline

A large-scale dataset in first-person (egocentric) vision: multi-faceted, audio-visual, non-scripted recordings in native environments (i.e. the wearers' homes), capturing all daily activities in the kitchen over multiple days. Annotations are collected using a novel 'Pause-and-Talk' narration interface.

Erratum [Important]: We have recently detected an error in our pre-extracted RGB and optical flow frames for two videos in our dataset. This does not affect the videos themselves or any of the annotations in this GitHub repository. However, if you have been using our pre-extracted frames, you can fix the error on your end by following the instructions in this link.

Extended Sequences (+RGB Frames, Flow Frames, Gyroscope + accelerometer data): Available at Data.Bris servers (740GB zipped) or via Academic Torrents

Original Sequences (+RGB and Flow Frames): Available at Data.Bris servers (1.1TB zipped) or via Academic Torrents

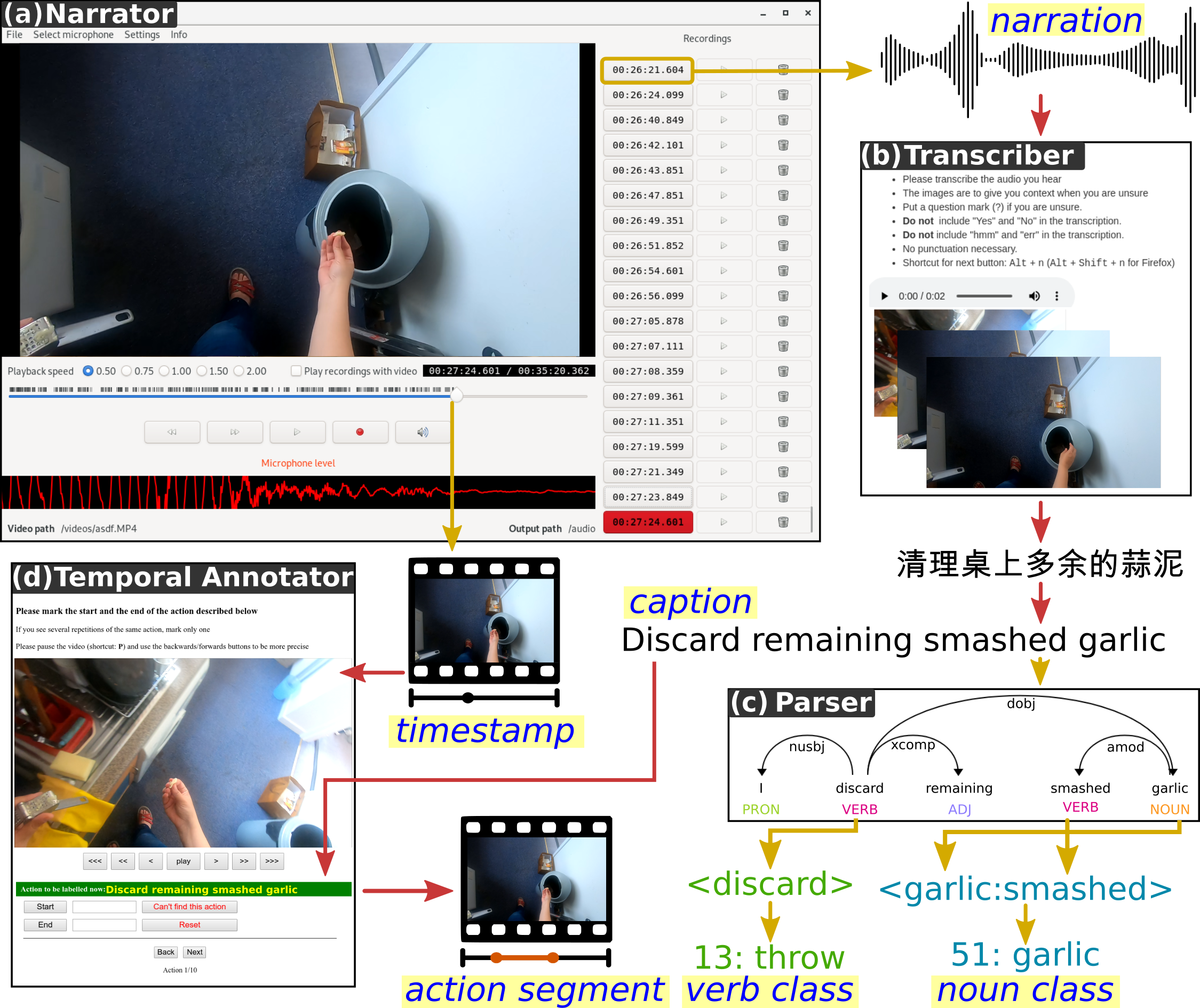

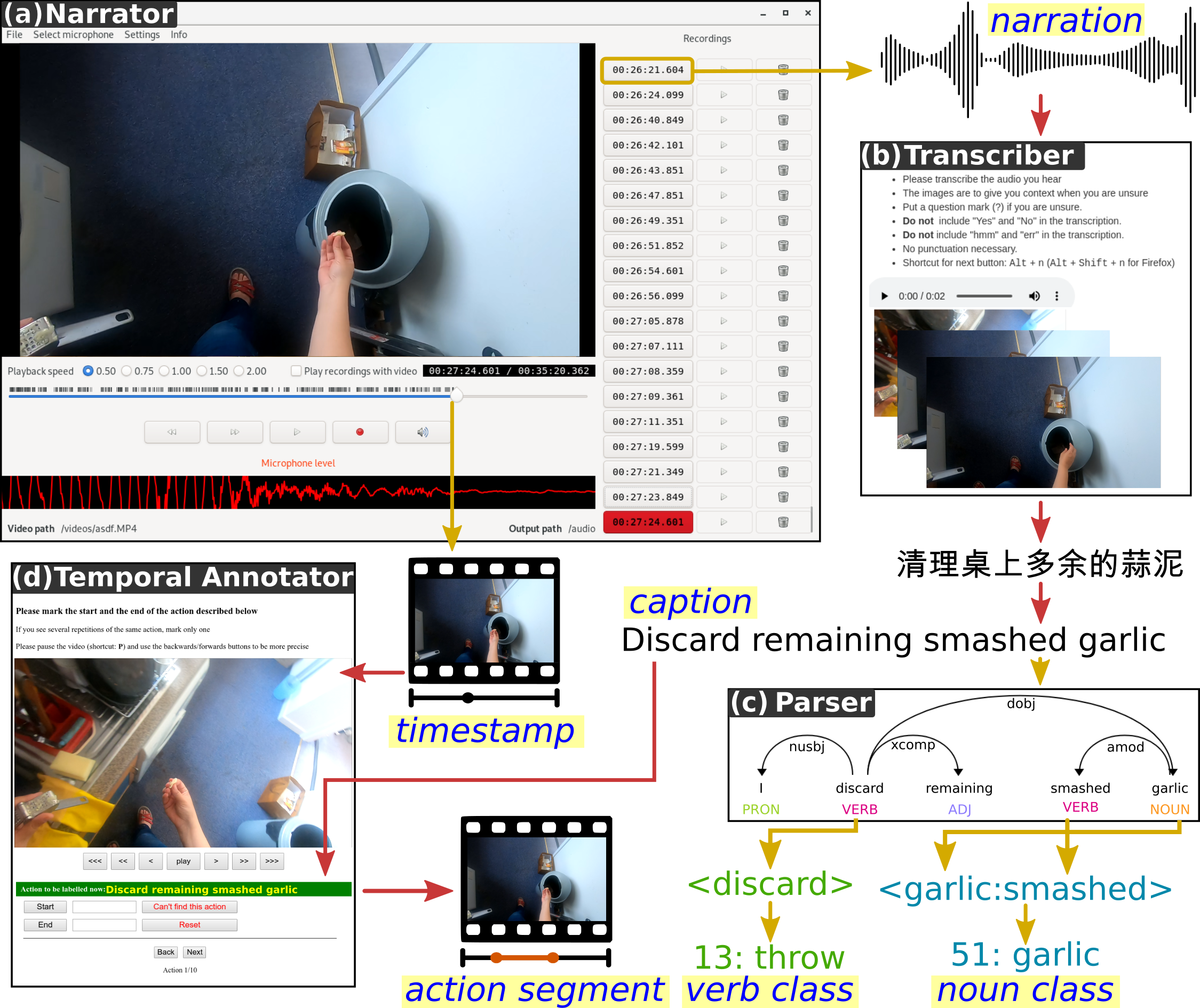

Automatic annotations (masks, hands and objects): Available for download at Data.Bris server (10 GB). We also have two repositories that let you visualise and utilise these automatic annotations, one for hand-object detections and one for masks.

We also offer a Python script to download various parts of the dataset.

All annotations (Train/Val/Test) for all challenges are available at the EPIC-KITCHENS-100-annotations repo.

Code to visualise and utilise automatic annotations is available for both object masks and hand-object detections.

The EPIC Narrator, used to collect narrations for EPIC-KITCHENS-100, is open-sourced at the EPIC-Narrator repo.

Cite our IJCV paper (Open Access 2021, Published 2022): PDF or Arxiv:

@ARTICLE{Damen2022RESCALING,
  title={Rescaling Egocentric Vision: Collection, Pipeline and Challenges for EPIC-KITCHENS-100},
  author={Damen, Dima and Doughty, Hazel and Farinella, Giovanni Maria and Furnari, Antonino
          and Ma, Jian and Kazakos, Evangelos and Moltisanti, Davide and Munro, Jonathan
          and Perrett, Toby and Price, Will and Wray, Michael},
  journal={International Journal of Computer Vision (IJCV)},
  year={2022},
  volume={130},
  pages={33--55},
  url={https://doi.org/10.1007/s11263-021-01531-2}
}

Additionally, cite the original paper (available now on Arxiv and the CVF):

@INPROCEEDINGS{Damen2018EPICKITCHENS,
  title={Scaling Egocentric Vision: The EPIC-KITCHENS Dataset},
  author={Damen, Dima and Doughty, Hazel and Farinella, Giovanni Maria and Fidler, Sanja and
          Furnari, Antonino and Kazakos, Evangelos and Moltisanti, Davide and Munro, Jonathan
          and Perrett, Toby and Price, Will and Wray, Michael},
  booktitle={European Conference on Computer Vision (ECCV)},
  year={2018}
}

An extended journal paper is available (now on IEEE, with a preprint on Arxiv):

@ARTICLE{Damen2021PAMI,
  title={The EPIC-KITCHENS Dataset: Collection, Challenges and Baselines},
  author={Damen, Dima and Doughty, Hazel and Farinella, Giovanni Maria and Fidler, Sanja and
          Furnari, Antonino and Kazakos, Evangelos and Moltisanti, Davide and Munro, Jonathan
          and Perrett, Toby and Price, Will and Wray, Michael},
  journal={IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI)},
  year={2021},
  volume={43},
  number={11},
  pages={4125--4141},
  doi={10.1109/TPAMI.2020.2991965}
}

EPIC-KITCHENS-55 and EPIC-KITCHENS-100 were collected as a tool for research in computer vision. The dataset may have unintended biases (including those of a societal, gender or racial nature).

All datasets and benchmarks on this page are copyright by us and published under the Creative Commons Attribution-NonCommercial 4.0 International License. This means that you must give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use. You may not use the material for commercial purposes.

For commercial licenses of EPIC-KITCHENS and any of its annotations, email us at uob-epic-kitchens@bristol.ac.uk

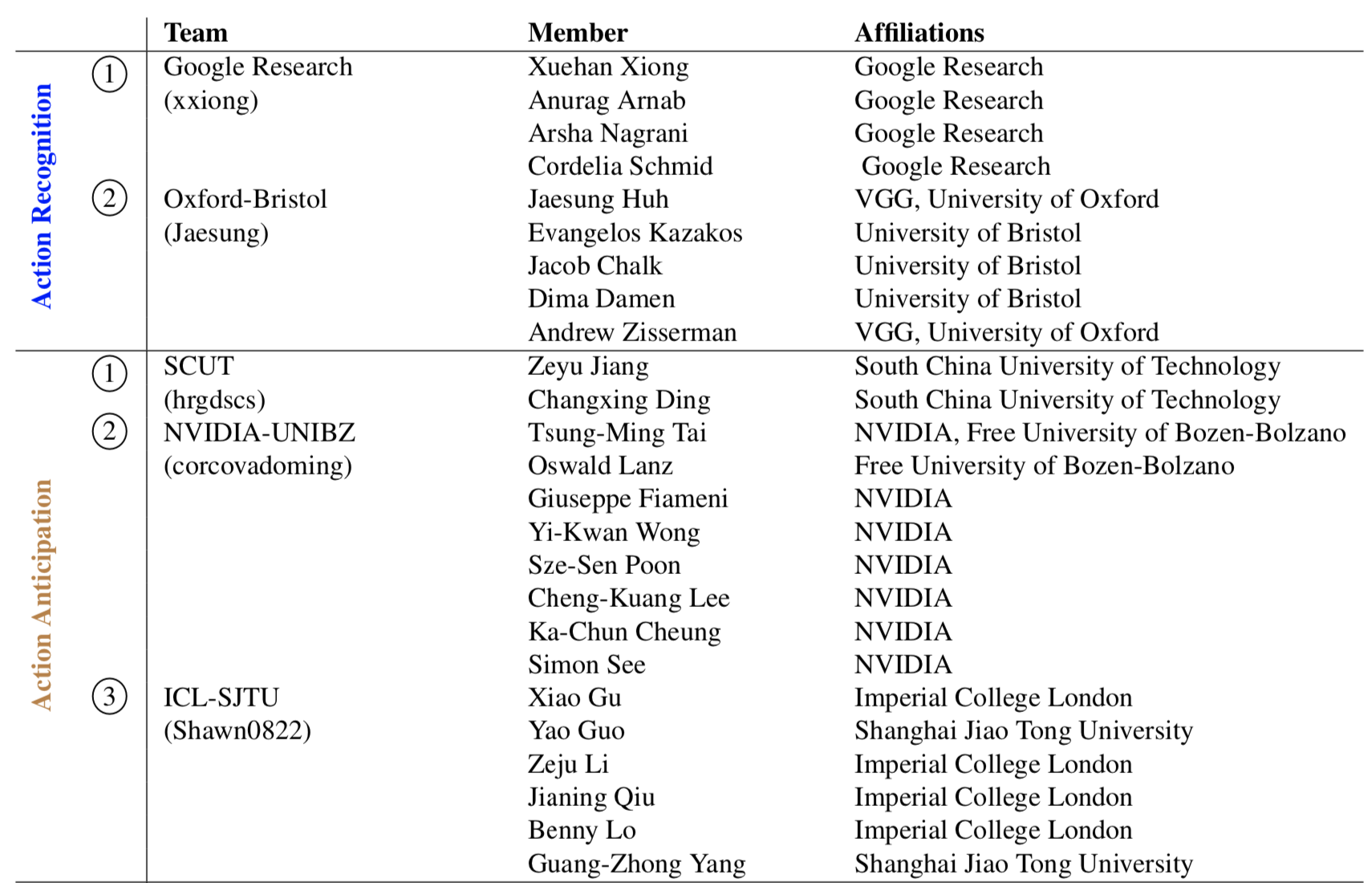

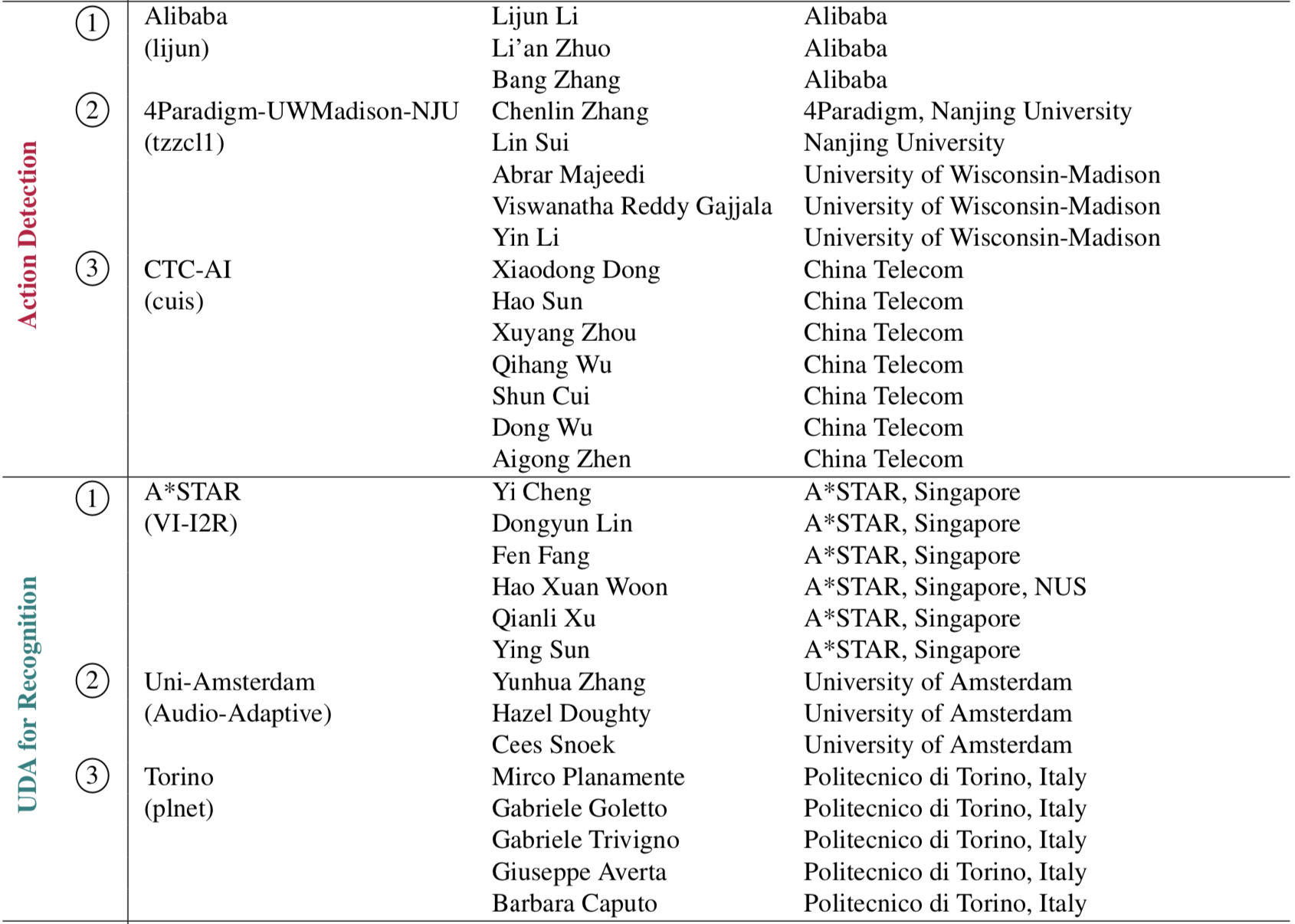

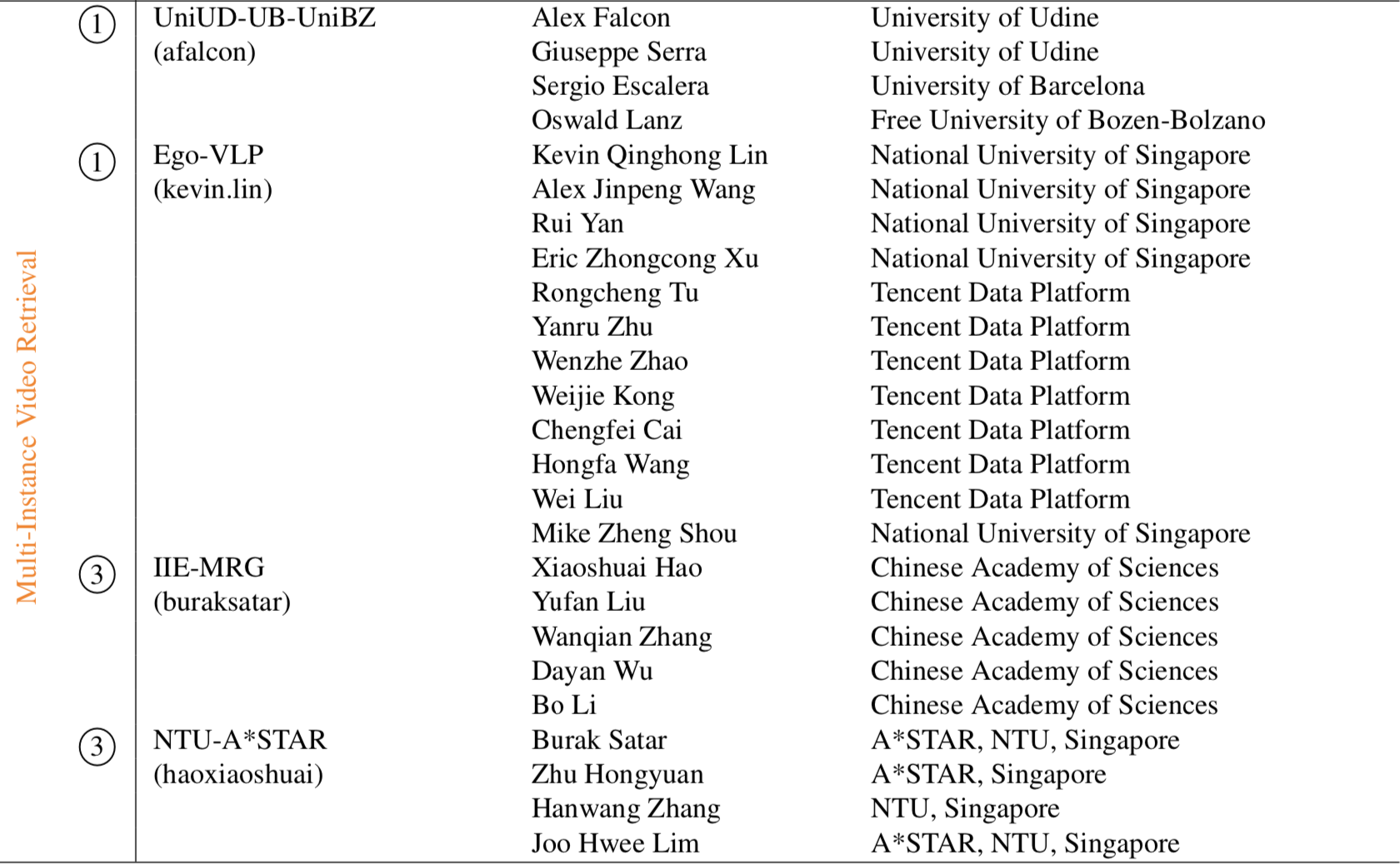

The five leaderboards are available for testing. This is not a formal challenge. Please revisit the website next January for the 2023 challenge guidelines. The CodaLab server pages detail the submission format and evaluation metrics.

To submit to any of the five competitions, you need to register an account for that challenge using a valid institutional (university/company) email address and fill in this form with your team's details. Only one registration per research team is allowed. We check each registration manually and expect to accept registrations within 2 working days.

For all challenges, each team may submit at most once per day and at most 50 times in total. These limits include submissions that fail because of formatting errors; please do not contact us to ask for them to be increased.

To submit your results, follow the JSON submission format, upload your results and allow time for the evaluation to complete (typically several minutes). Note our new rules requiring each submission to declare its supervision level, according to our proposed scale. Once the evaluation is complete, the results automatically appear on the public leaderboards, but you can withdraw them at any time.

Splits. The dataset is split into train/validation/test sets, with a ratio of roughly 75/10/15.

The action recognition, detection and anticipation challenges use all the splits.

The unsupervised domain adaptation and action retrieval challenges use different splits, as detailed below.

You can download all the necessary annotations here.

You can find more details about the splits in our paper.

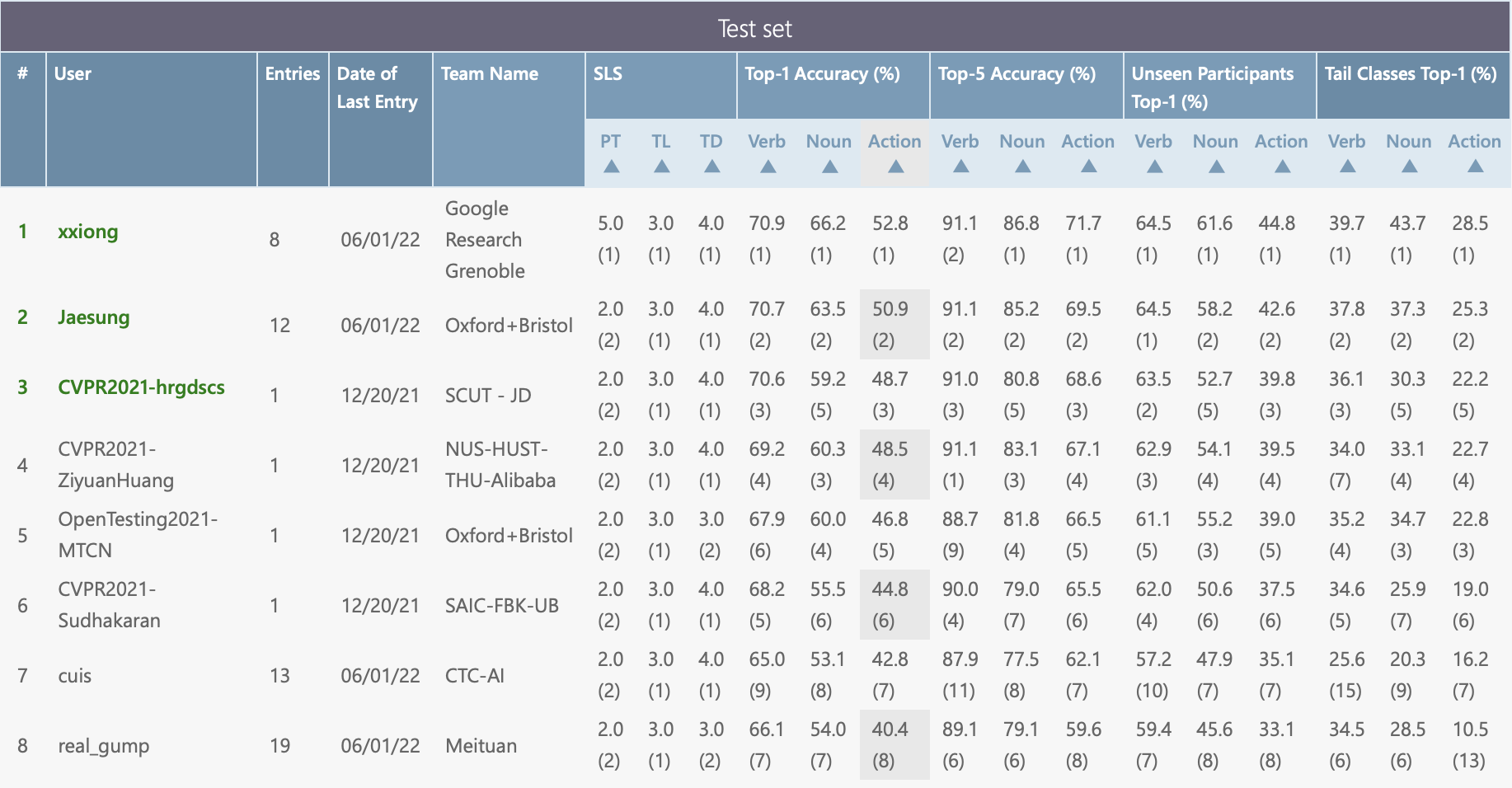

Evaluation. All challenges are evaluated on all segments in the test split.

The action recognition and anticipation challenges are additionally evaluated considering unseen participants and tail classes. These are automatically evaluated in the scripts and you do not need to do anything specific to report these.

Unseen participants. The validation and test sets contain participants that are not present in the train set.

There are 2 unseen participants in the validation set and another 3 in the test set, accounting for 1,065 and 4,110 action segments, respectively.
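The official scripts select this subset automatically; purely to illustrate the definition, here is a minimal sketch that assumes each evaluation segment is available as a `(segment_id, participant_id)` pair. That layout, the function name and the ids in the example are hypothetical, not the format of the annotation files.

```python
from collections.abc import Iterable


def unseen_participant_segments(
    train_participants: Iterable[str],
    eval_segments: list[tuple[str, str]],
) -> list[str]:
    """Return ids of evaluation segments recorded by participants absent from training.

    `eval_segments` holds (segment_id, participant_id) pairs; this layout is hypothetical.
    """
    seen = set(train_participants)
    return [seg_id for seg_id, participant in eval_segments if participant not in seen]


# Toy data with made-up ids: P31 does not appear in the training set.
train_participants = ["P01", "P02"]
val_segments = [("P01_101_0", "P01"), ("P31_01_0", "P31"), ("P31_01_1", "P31")]
print(unseen_participant_segments(train_participants, val_segments))
# ['P31_01_0', 'P31_01_1']
```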

Tail classes. These are the set of smallest classes whose instances account for 20% of the total number of instances in training. An action class is a tail class if either its verb class or its noun class is a tail class.
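The tail-class definition can be sketched as follows: walk the classes from rarest to most frequent and keep adding them while their combined training instances stay within 20% of the total. How the class that crosses the 20% boundary is handled, and how ties are broken, are our assumptions; the official evaluation scripts may resolve them differently. The function names are hypothetical.

```python
from collections import Counter


def tail_classes(train_labels: list[int], fraction: float = 0.2) -> set[int]:
    """Smallest classes whose training instances together make up at most `fraction` of all instances."""
    counts = Counter(train_labels)
    budget = fraction * sum(counts.values())
    tail: set[int] = set()
    covered = 0
    # Walk from the rarest class upwards; ties are broken by class id so the result is deterministic.
    for cls, count in sorted(counts.items(), key=lambda item: (item[1], item[0])):
        if covered + count > budget:
            break  # boundary convention (assumption): stop before exceeding the budget
        tail.add(cls)
        covered += count
    return tail


def is_tail_action(verb: int, noun: int, tail_verbs: set[int], tail_nouns: set[int]) -> bool:
    """An action is a tail action if its verb class or its noun class is a tail class."""
    return verb in tail_verbs or noun in tail_nouns


# Toy verb labels: 100 instances over 5 classes, so the 20% budget is 20 instances.
verb_labels = [0] * 50 + [1] * 30 + [2] * 12 + [3] * 5 + [4] * 3
tail_verbs = tail_classes(verb_labels)
print(sorted(tail_verbs))  # [2, 3, 4]
```

Here classes 4, 3 and 2 together cover exactly 20 of the 100 instances, so adding class 1 would exceed the budget.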

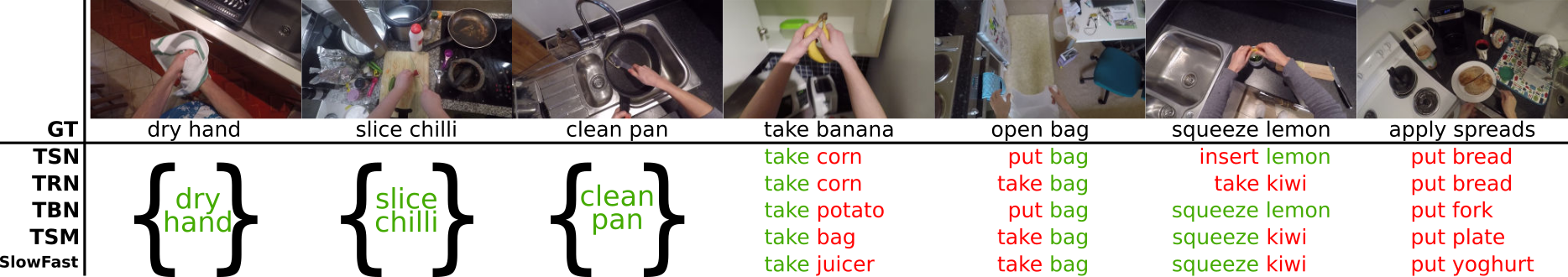

Task. Assign a (verb, noun) label to a trimmed segment.

Training input (strong supervision). A set of trimmed action segments, each annotated with a (verb, noun) label.

Training input (weak supervision). A set of untrimmed videos, each annotated with a list of (timestamp, verb, noun) labels.

Note that for each action you are given a single, roughly aligned timestamp, i.e. one timestamp located around the action. Timestamps may fall on background frames or on frames belonging to another action.

Testing input. A set of trimmed unlabelled action segments.

Splits. Train and validation for training, evaluated on the test split.

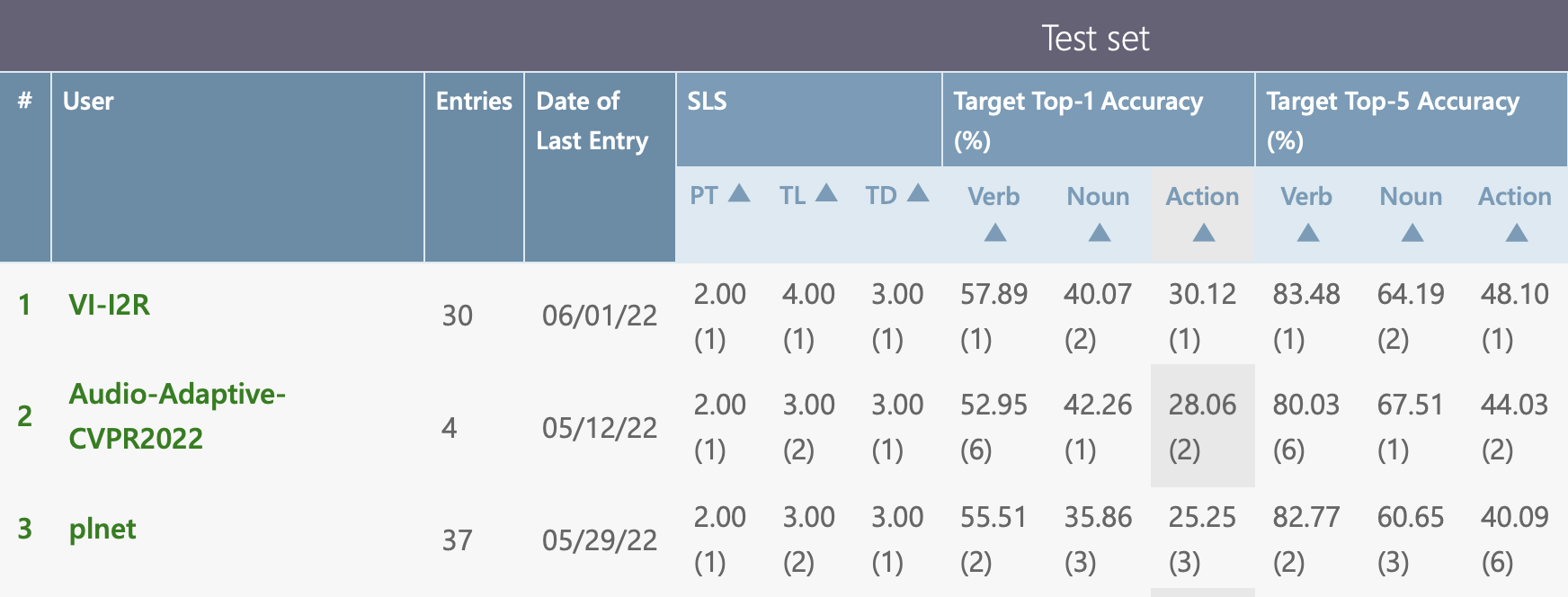

Evaluation metrics. Top-1/5 accuracy for verb, noun and action (verb+noun), calculated for all segments as well as for unseen participants and tail classes.
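As a rough sketch of these metrics: a segment counts as correct at top-k if its true label is among the k highest-scoring classes, and an action is only correct if the (verb, noun) pair matches. Scoring each pair as the product of its verb and noun scores is an assumption made here for illustration; the official evaluation may build action scores differently. The toy data uses k = 1 and k = 2 because it has only a handful of classes, and all names are hypothetical.

```python
from collections.abc import Hashable, Mapping, Sequence


def topk_accuracy(score_rows: Sequence[Mapping[Hashable, float]], labels: Sequence[Hashable], k: int) -> float:
    """Fraction of segments whose true label is among the k highest-scoring classes."""
    hits = 0
    for scores, label in zip(score_rows, labels):
        ranked = sorted(scores, key=scores.__getitem__, reverse=True)
        hits += label in ranked[:k]
    return hits / len(labels)


def action_scores(verb_scores: Sequence[float], noun_scores: Sequence[float]) -> dict[tuple[int, int], float]:
    """Score every (verb, noun) pair; the product rule is an assumption, not the official one."""
    return {(v, n): vs * ns for v, vs in enumerate(verb_scores) for n, ns in enumerate(noun_scores)}


# Toy softmax outputs for 2 segments, 3 verb classes and 2 noun classes.
verb_out = [[0.7, 0.2, 0.1], [0.1, 0.3, 0.6]]
noun_out = [[0.4, 0.6], [0.9, 0.1]]
verb_gt, noun_gt = [0, 1], [1, 0]

verb_rows = [dict(enumerate(row)) for row in verb_out]
noun_rows = [dict(enumerate(row)) for row in noun_out]
action_rows = [action_scores(v, n) for v, n in zip(verb_out, noun_out)]
action_gt = list(zip(verb_gt, noun_gt))

for name, rows, gt in [("verb", verb_rows, verb_gt), ("noun", noun_rows, noun_gt), ("action", action_rows, action_gt)]:
    print(name, topk_accuracy(rows, gt, 1), topk_accuracy(rows, gt, 2))
# verb 0.5 1.0
# noun 1.0 1.0
# action 0.5 1.0
```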

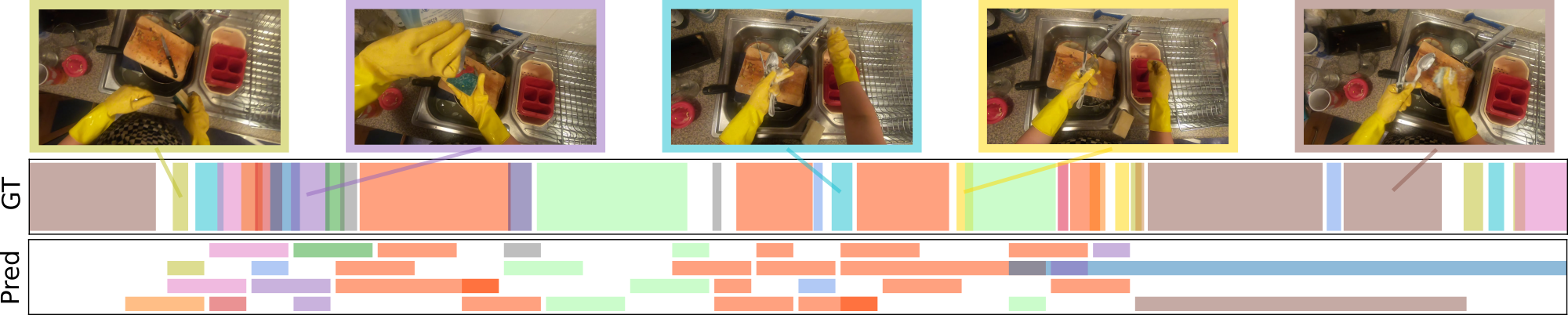

Task. Detect the start and the end of each action in an untrimmed video, and assign a (verb, noun) label to each detected segment.

Training input. A set of trimmed action segments, each annotated with a (verb, noun) label.

Testing input. A set of untrimmed videos. Important: you are not allowed to use knowledge of the trimmed segments in the test set when reporting for this challenge.

Splits. Train and validation for training, evaluated on the test split.

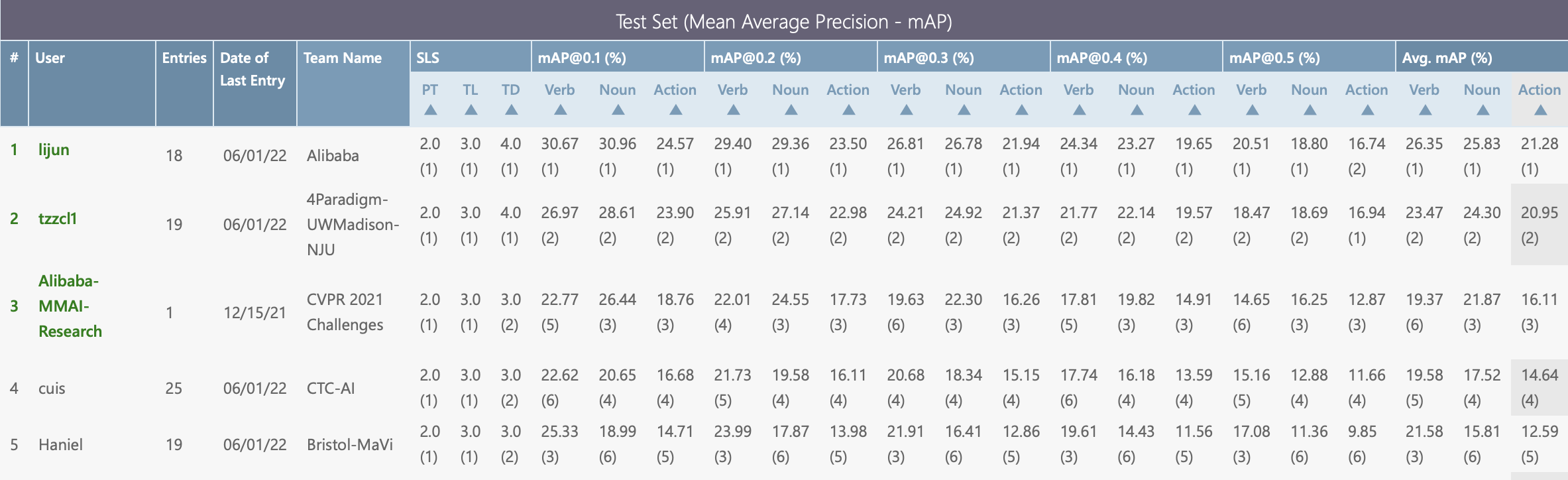

Evaluation metrics. Mean Average Precision (mAP) at IoU thresholds from 0.1 to 0.5.
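To make the metric concrete, here is a simplified sketch of temporal-IoU-based mAP: predictions of each (verb, noun) class are matched greedily, in descending score order, to unmatched ground-truth segments of the same class in the same video, and a match counts only if its temporal IoU reaches the threshold. It assumes thresholds 0.1, 0.2, ..., 0.5 and uses the uninterpolated form of AP; the official evaluation may interpolate precision and handle matching ties differently, so its numbers can differ. The `Segment` type and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    video_id: str
    start: float  # seconds
    end: float  # seconds
    label: tuple[int, int]  # (verb, noun)
    score: float = 1.0  # confidence; only meaningful for predictions


def temporal_iou(a: Segment, b: Segment) -> float:
    """Intersection over union of two time intervals."""
    inter = max(0.0, min(a.end, b.end) - max(a.start, b.start))
    union = (a.end - a.start) + (b.end - b.start) - inter
    return inter / union if union > 0 else 0.0


def average_precision(preds: list[Segment], gts: list[Segment], threshold: float) -> float:
    """Uninterpolated AP for one class, using greedy matching in descending score order."""
    if not gts:
        return 0.0
    matched: set[int] = set()
    true_positives = 0
    precision_sum = 0.0
    for rank, pred in enumerate(sorted(preds, key=lambda s: s.score, reverse=True), start=1):
        best_iou, best_idx = 0.0, None
        for idx, gt in enumerate(gts):
            if idx in matched or gt.video_id != pred.video_id:
                continue
            iou = temporal_iou(pred, gt)
            if iou > best_iou:
                best_iou, best_idx = iou, idx
        if best_idx is not None and best_iou >= threshold:
            matched.add(best_idx)
            true_positives += 1
            precision_sum += true_positives / rank  # precision at this recall step
    return precision_sum / len(gts)


def mean_ap(
    preds: list[Segment],
    gts: list[Segment],
    thresholds: tuple[float, ...] = (0.1, 0.2, 0.3, 0.4, 0.5),
) -> dict[float, float]:
    """mAP over the ground-truth action classes, reported per IoU threshold."""
    classes = {gt.label for gt in gts}
    if not classes:
        return {t: 0.0 for t in thresholds}
    results = {}
    for t in thresholds:
        aps = [
            average_precision([p for p in preds if p.label == c], [g for g in gts if g.label == c], t)
            for c in classes
        ]
        results[t] = sum(aps) / len(aps)
    return results


gts = [Segment("vid_a", 0.0, 10.0, (0, 0)), Segment("vid_a", 20.0, 30.0, (1, 2))]
preds = [
    Segment("vid_a", 1.0, 10.0, (0, 0), 0.9),  # IoU 0.9 with the first ground truth
    Segment("vid_a", 22.0, 26.0, (1, 2), 0.8),  # IoU 0.4 with the second ground truth
    Segment("vid_a", 40.0, 50.0, (0, 0), 0.5),  # no overlap: a false positive
]
print(mean_ap(preds, gts))
# {0.1: 1.0, 0.2: 1.0, 0.3: 1.0, 0.4: 1.0, 0.5: 0.5}
```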

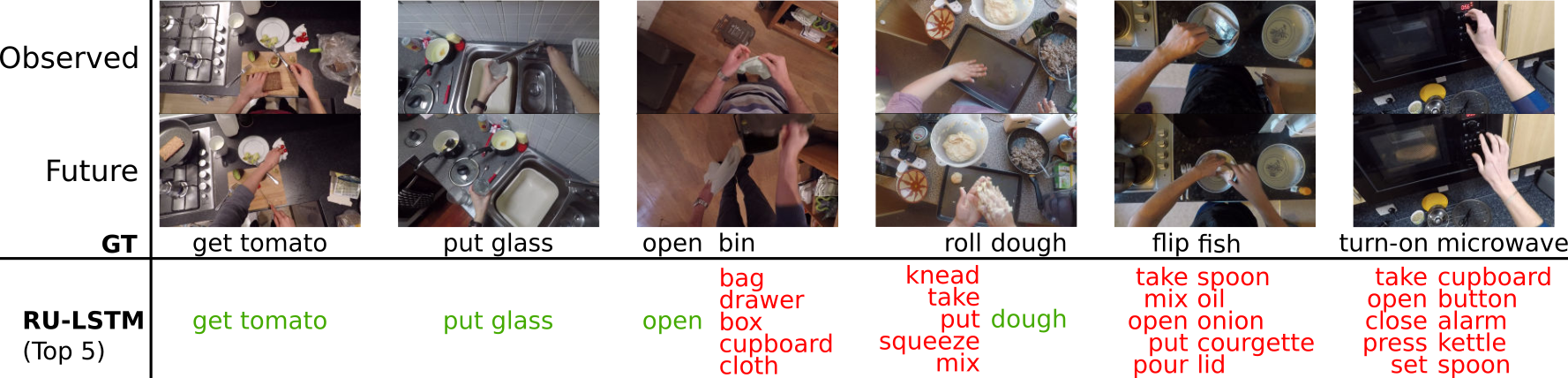

Task. Predict the (verb, noun) label of a future action by observing a segment preceding its occurrence.

Training input. A set of trimmed action segments, each annotated with a (verb, noun) label.

Testing input. During testing, you are allowed to observe a segment that ends at least one second before the start of the action you are testing on.
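The constraint above fixes where the observed segment must end; how much video before that point a model looks at is a modelling choice. A minimal sketch, assuming times in seconds and a hypothetical `observe_seconds` parameter for the observation length:

```python
def observation_window(
    action_start: float,
    observe_seconds: float,
    anticipation_gap: float = 1.0,
) -> tuple[float, float]:
    """Return the (start, end) time in seconds of the clip a model may observe.

    The clip must end at least `anticipation_gap` seconds (1 s under the rules above)
    before the action starts; `observe_seconds` is a free, hypothetical modelling choice.
    """
    if anticipation_gap < 1.0:
        raise ValueError("the observed segment must end at least 1 s before the action")
    end = action_start - anticipation_gap
    if end <= 0.0:
        raise ValueError("nothing can be observed before this action")
    start = max(0.0, end - observe_seconds)  # clip at the start of the video
    return start, end


print(observation_window(12.5, observe_seconds=2.0))  # (9.5, 11.5)
print(observation_window(1.5, observe_seconds=5.0))  # (0.0, 0.5)
```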

Splits. Train and validation for training, evaluated on the test split.

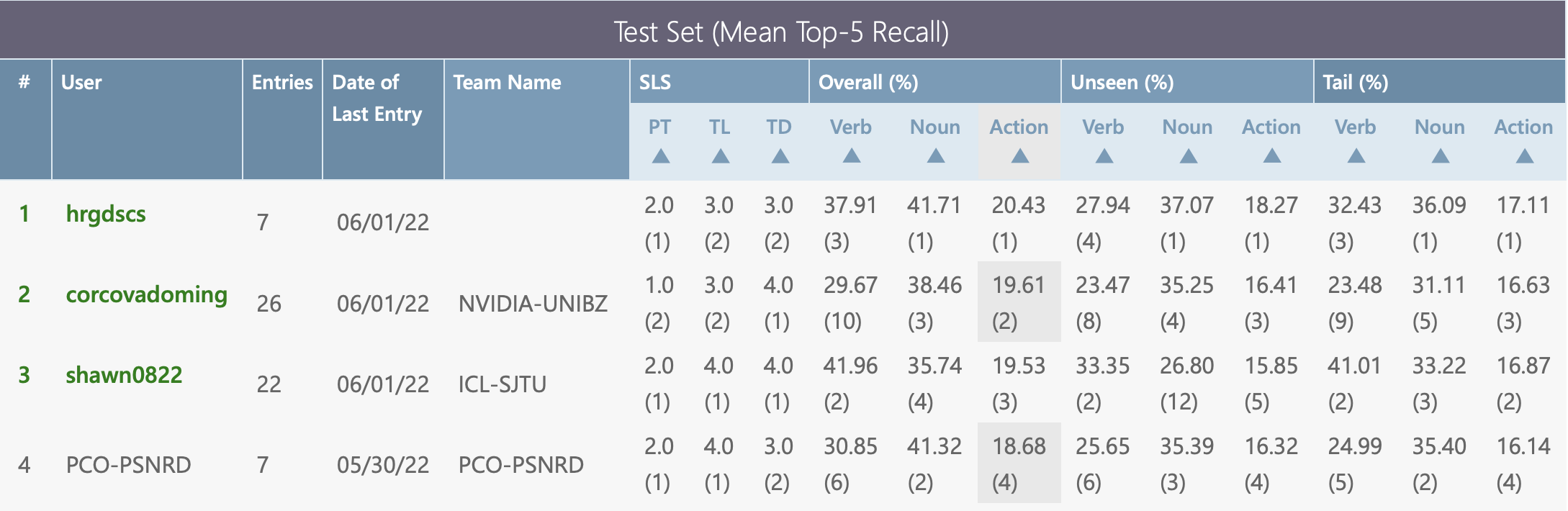

Evaluation metrics. Top-5 recall averaged over all classes, as defined here, calculated for all segments as well as for unseen participants and tail classes.
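Averaging recall per class, rather than over all segments, keeps frequent classes from dominating the score. A minimal sketch of class-mean top-k recall, assuming the average is taken over the classes that occur in the ground truth (the linked definition and the official scripts are authoritative, and may restrict which classes are averaged). The toy example uses k = 1 because it has only two classes, and the function name is hypothetical.

```python
from collections import defaultdict
from collections.abc import Sequence


def mean_topk_recall(scores: Sequence[Sequence[float]], labels: Sequence[int], k: int = 5) -> float:
    """Per-class top-k recall, averaged over the classes that occur in `labels`."""
    hits: dict[int, int] = defaultdict(int)
    totals: dict[int, int] = defaultdict(int)
    for row, label in zip(scores, labels):
        top_k = sorted(range(len(row)), key=row.__getitem__, reverse=True)[:k]
        totals[label] += 1
        hits[label] += label in top_k
    return sum(hits[c] / totals[c] for c in totals) / len(totals)


# Toy example: class 0 is frequent (3 segments), class 1 is rare (1 segment).
scores = [[0.9, 0.1], [0.8, 0.2], [0.3, 0.7], [0.4, 0.6]]
labels = [0, 0, 0, 1]
print(mean_topk_recall(scores, labels, k=1))  # about 0.833, i.e. (2/3 + 1/1) / 2
```

For comparison, plain top-1 accuracy on the same toy data is 3/4, because the single rare-class segment carries the same weight as each frequent-class segment.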

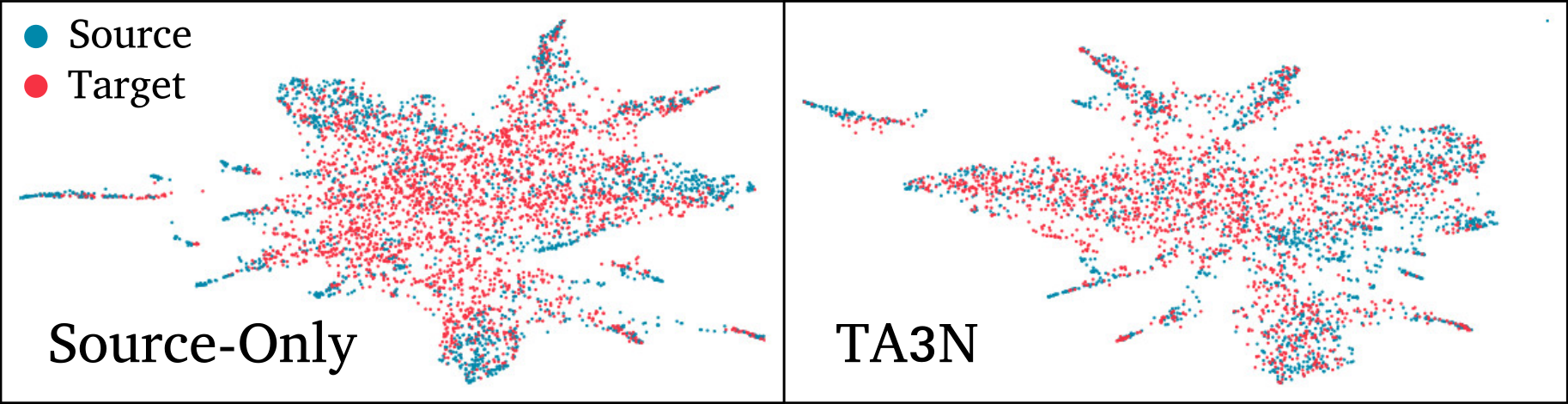

Task. Assign a (verb, noun) label to a trimmed segment, following the Unsupervised Domain Adaptation paradigm: a labelled source domain is used for training, and the model needs to adapt to an unlabelled target domain.

Training input. A set of trimmed action segments, each annotated with a (verb, noun) label.

Testing input. A set of trimmed unlabelled action segments.

Splits. Videos recorded in 2018 (EPIC-KITCHENS-55) constitute the source domain, while videos recorded for EPIC-KITCHENS-100's extension constitute the unlabelled target domain.

This challenge uses custom train/validation/test splits, which you can find here.

Evaluation metrics. Top-1/5 accuracy for verb, noun and action (verb+noun), on the target test set.

Tasks. Video to text: given a query video segment, rank captions such that those with a higher rank are more semantically relevant to the action in the query video segment.

Text to video: given a query caption, rank video segments such that those with a higher rank are more semantically relevant to the query caption.

Training input. A set of trimmed action segments, each annotated with a caption.

Captions correspond to the narration in English from which the action segment was obtained.

Testing input. A set of trimmed action segments with captions. Important: you are not allowed to use the known correspondence between segments and captions in the test set.

Splits. This challenge has its own custom splits, available here.

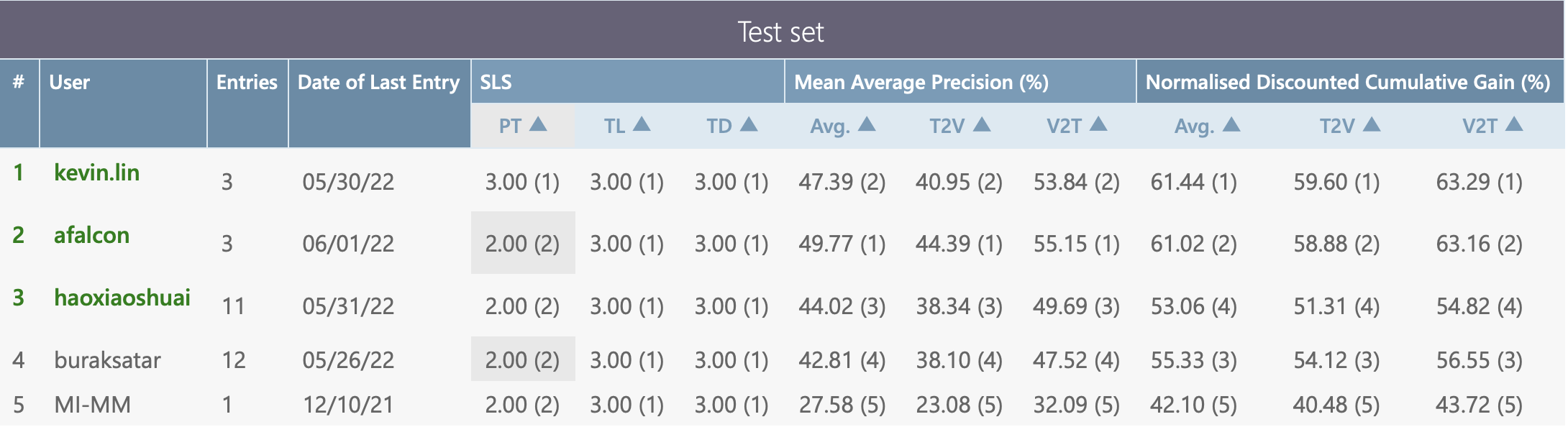

Evaluation metrics. Normalised Discounted Cumulative Gain (nDCG) and Mean Average Precision (mAP).

You can find more details in our paper.
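To illustrate how nDCG rewards rankings that place semantically relevant items first, here is a minimal sketch. It assumes that the relevance between a video and a caption is the mean of the IoU of their verb-class sets and the IoU of their noun-class sets, and it uses the linear-gain form of DCG; both choices are our reading, not a confirmed reproduction of the official evaluation, and all names and captions below are hypothetical. mAP is not covered here.

```python
import math
from collections.abc import Sequence


def semantic_relevance(verbs_a: set[str], nouns_a: set[str], verbs_b: set[str], nouns_b: set[str]) -> float:
    """Assumed relevance: mean of the IoU of verb-class sets and of noun-class sets."""

    def iou(x: set[str], y: set[str]) -> float:
        union = x | y
        return len(x & y) / len(union) if union else 0.0

    return 0.5 * (iou(verbs_a, verbs_b) + iou(nouns_a, nouns_b))


def ndcg(relevances_in_ranked_order: Sequence[float]) -> float:
    """nDCG with linear gain: DCG = sum_i rel_i / log2(i + 1) for 1-based ranks i."""

    def dcg(rels: Sequence[float]) -> float:
        return sum(rel / math.log2(i + 1) for i, rel in enumerate(rels, start=1))

    ideal = dcg(sorted(relevances_in_ranked_order, reverse=True))
    return dcg(relevances_in_ranked_order) / ideal if ideal > 0 else 0.0


# Video-to-text query: a segment labelled (verb "open", noun "door").
query = ({"open"}, {"door"})
captions = {
    "open cupboard": ({"open"}, {"cupboard"}),
    "open door": ({"open"}, {"door"}),
    "wash plate": ({"wash"}, {"plate"}),
}
ranking = ["open cupboard", "open door", "wash plate"]  # a hypothetical model ranking
rels = [semantic_relevance(*query, *captions[c]) for c in ranking]
print(rels)  # [0.5, 1.0, 0.0]
print(round(ndcg(rels), 3))  # 0.86
```

Swapping the first two captions would give the ideal ordering and an nDCG of 1.0; because relevance is graded, the partially matching "open cupboard" still earns credit instead of counting as a plain miss.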

We are a group of researchers working in computer vision from the University of Bristol and the University of Catania. The original dataset was collected in collaboration with Sanja Fidler, University of Toronto.

The work on extending EPIC-KITCHENS was supported by the following research grants